TL;DR:

- Regulated industries need structured AI education workflows to balance compliance and innovation.

- Using frameworks like the NIST AI RMF and the WEF Playbook helps build accountable, measurable AI literacy.

- Embedding continuous, role-specific training into daily workflows ensures compliance and strategic growth.

Regulated industries face a sharp tension: AI deployment moves fast, but compliance cannot be rushed. Organizations that skip structured AI education workflows risk both regulatory penalties and strategic drift. The NIST AI RMF's four core functions (Govern, Map, Measure, and Manage) provide a widely used foundation for building compliant AI programs. This guide walks corporate leaders through every stage of designing, executing, and measuring an AI education workflow that delivers real business value while keeping regulators satisfied.

Table of Contents

- Understanding AI education workflow challenges in regulated industries

- Defining workflow objectives and required competencies

- Selecting tools and resources for effective AI education

- Building and executing your AI education workflow

- Measuring success and continuous improvement

- Perspective: Why most AI education fails in regulated environments and how to succeed

- Accelerate your AI education workflow with expert guidance

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Workflow drives compliance | Structured AI education workflows help regulated organizations stay compliant and prepared. |
| Balance governance with speed | Overly rigid controls slow innovation while too little governance puts compliance at risk. |
| Continuous improvement is essential | Regularly measuring and updating workflows ensures enduring business and regulatory value. |
| Choose resources strategically | Selecting formats and materials matched to compliance needs boosts engagement and outcomes. |

Understanding AI education workflow challenges in regulated industries

Regulated industries do not get the luxury of trial and error. A misstep in financial services, healthcare, or energy can trigger audits, fines, or public trust failures. Yet AI technology evolves faster than most compliance teams can track. That gap between technology speed and regulatory readiness is where most organizations struggle.

The most common pain points corporate leaders report include:

- Regulatory uncertainty: Standards like NIST AI RMF, EU AI Act, and sector-specific rules shift frequently, making it hard to anchor training content.

- Staff skill gaps: Technical teams understand AI models, but operational and legal staff often lack the vocabulary to assess risk or apply governance rules.

- Rapid technology shifts: New AI tools enter the market monthly, and existing training materials become outdated quickly.

- Siloed learning: Education happens in isolated departments rather than across functions, creating inconsistent risk awareness.

One structural problem stands out above the rest: the governance-velocity tradeoff. Apply controls too heavily and your competitors outpace you. Apply them too lightly and you expose the organization to serious compliance risk. As noted in the AI Governance Checklist, over-governance reduces innovation while under-governance increases risk, and balance is essential.

"The goal is not zero risk. The goal is managed risk at a pace that supports strategic growth."

A structured AI education workflow directly addresses this tradeoff. It creates a repeatable process for keeping staff current, embedding compliance checks into learning cycles, and giving leadership a clear audit trail. Germán León's AI teaching and trust resources explore how this structure builds organizational confidence over time. Without a workflow, education becomes reactive. With one, it becomes a strategic asset.

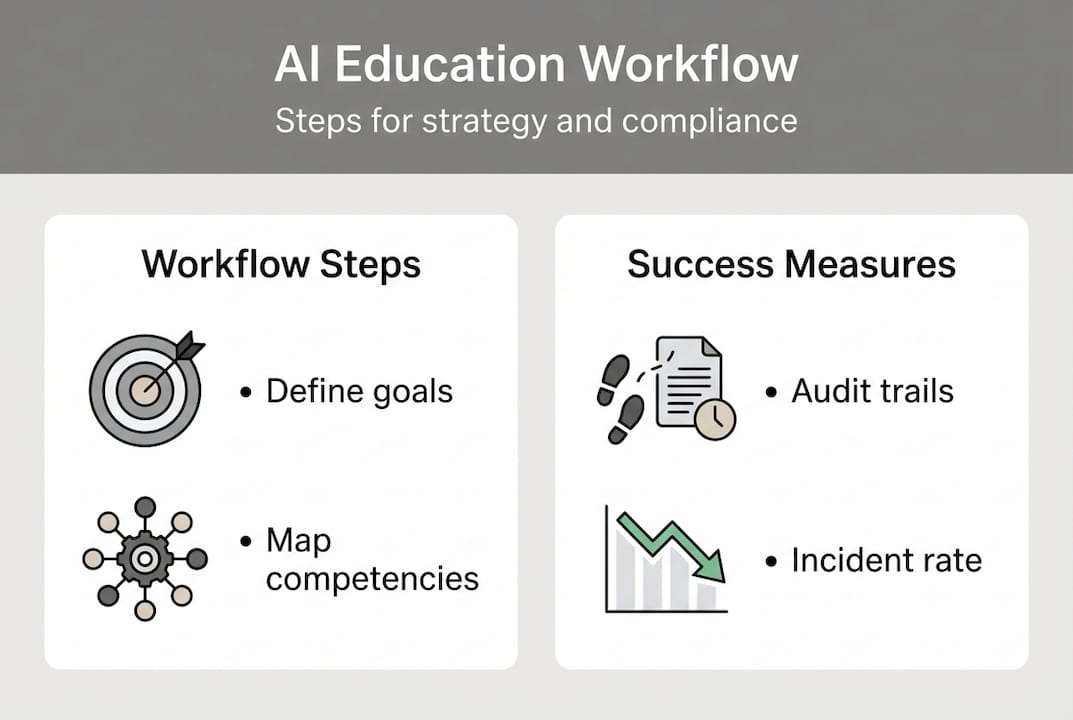

Defining workflow objectives and required competencies

With challenges scoped, the next step is to set clear objectives and define what skills your organization requires. Vague goals produce vague results. Your AI education workflow needs specific compliance targets and measurable competency outcomes.

Start by mapping your compliance goals to a recognized framework. The NIST AI RMF organizes responsible AI around four functions: Govern, Map, Measure, and Manage. Each function corresponds to a set of workforce behaviors. For example, "Govern" requires staff to understand AI policies and accountability structures. "Map" requires teams to identify AI use cases and associated risks.

The WEF Playbook's Play 9 emphasizes the need for responsible AI literacy across all organizational levels, not just technical teams. This is a critical insight. Literacy must be tiered by role.

Required competencies typically fall into three categories:

- Technical: Understanding model behavior, data quality, bias detection, and system limitations.

- Operational: Applying AI outputs in workflows, escalating anomalies, and documenting decisions.

- Ethical: Recognizing fairness issues, privacy obligations, and accountability requirements.

| Competency area | Target audience | Compliance link |
|---|---|---|
| Technical AI literacy | Data and engineering teams | NIST AI RMF: Map, Measure |
| Operational AI use | Business unit managers | NIST AI RMF: Manage |
| Ethical AI judgment | All staff, legal, compliance | NIST AI RMF: Govern |
| Strategic AI governance | C-suite and board | EU AI Act, sector rules |
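
The competency table can also be kept as data so that learning paths are assigned by lookup rather than by hand. The following is a minimal sketch, not a prescribed implementation: the role identifiers (such as `engineering` or `all_staff`) and the `competencies_for` helper are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Competency:
    area: str
    audience: tuple[str, ...]          # hypothetical role identifiers
    compliance_links: tuple[str, ...]  # framework functions or rule sets


# Rows mirror the competency table; role identifiers are assumptions.
COMPETENCIES = [
    Competency("Technical AI literacy", ("data", "engineering"), ("Map", "Measure")),
    Competency("Operational AI use", ("business_unit_manager",), ("Manage",)),
    Competency("Ethical AI judgment", ("all_staff", "legal", "compliance"), ("Govern",)),
    Competency("Strategic AI governance", ("c_suite", "board"), ("EU AI Act", "sector rules")),
]


def competencies_for(role: str) -> list[str]:
    """Return competency areas for a role; 'all_staff' rows apply to every role."""
    return [
        c.area
        for c in COMPETENCIES
        if role in c.audience or "all_staff" in c.audience
    ]
```

Under these assumptions, `competencies_for("engineering")` returns the technical and ethical areas, which matches the tiered-by-role idea: everyone gets the ethical baseline, and each role adds its own layer.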

Objectives should connect directly to business value. For example, reducing AI-related incident response time by 30% is both a compliance metric and an operational efficiency gain. Germán León's work on responsible AI use shows how linking education to measurable outcomes keeps programs funded and prioritized.

Pro Tip: Write each workflow objective as a testable statement. Instead of "improve AI awareness," write "100% of operational staff can identify a high-risk AI decision and escalate it within one business day."
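
A testable objective can be checked directly against drill or incident records. The sketch below assumes each record is a pair of dates (when a high-risk decision was flagged, and when it was escalated, or `None` if it never was). It also assumes "one business day" means "by the end of the next weekday" and ignores public holidays; adjust both assumptions to your own policy.

```python
from datetime import date, timedelta
from typing import Optional


def next_business_day(day: date) -> date:
    """Return the next weekday after `day` (holidays are not considered)."""
    nxt = day + timedelta(days=1)
    while nxt.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        nxt += timedelta(days=1)
    return nxt


def escalated_in_time(flagged: date, escalated: Optional[date]) -> bool:
    """True if the escalation happened no later than the next business day."""
    return escalated is not None and flagged <= escalated <= next_business_day(flagged)


def objective_pass_rate(drills: list[tuple[date, Optional[date]]]) -> float:
    """Share of drills (0.0 to 1.0) in which the escalation deadline was met."""
    if not drills:
        raise ValueError("at least one drill record is required")
    passed = sum(escalated_in_time(flagged, escalated) for flagged, escalated in drills)
    return passed / len(drills)
```

The objective in the tip is met only when `objective_pass_rate` returns `1.0` for the operational staff segment; anything lower points to specific people or teams that need follow-up.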

Selecting tools and resources for effective AI education

Once objectives are set, picking the right tools and resources is critical for training efficiency and success. The format of your training matters as much as the content. Different roles require different delivery methods.

| Training format | Best for | Compliance strength | Scalability |
|---|---|---|---|
| Self-paced e-learning | Large, distributed teams | Medium | High |
| Live seminars or workshops | Leadership and cross-functional groups | High | Low |
| Vendor-led certification | Technical staff | High | Medium |
| Hybrid programs | All levels | Very high | High |

Compliance-oriented training materials must meet several standards. They should reference current regulatory frameworks, include scenario-based assessments, and generate completion records for audits. Materials that lack audit trails are a liability, not an asset.

Key characteristics to look for in any AI education resource:

- Clear alignment with NIST AI RMF or equivalent frameworks

- Role-specific content paths, not one-size-fits-all modules

- Built-in assessment and certification tracking

- Regular content updates tied to regulatory changes

- Support for multiple languages if operating globally

The WEF Playbook in practice demonstrates how organizations can apply recognized frameworks to real AI deployment scenarios. Hybrid approaches work best at scale. A combination of self-paced modules for foundational knowledge and live sessions for applied judgment creates depth without overwhelming staff schedules.

Pro Tip: Audit your current training library against your compliance framework before buying new tools. You may already have 60% of what you need. Fill gaps strategically rather than replacing everything.

Reviewing AI platform effectiveness case studies can help you benchmark your tool selection against what has worked in similar regulated environments.

Building and executing your AI education workflow

With tools selected, here is how to construct and execute your workflow. A structured rollout prevents the most common failure mode: launching training without governance infrastructure in place.

- Establish governance structure. Assign a workflow owner, define roles for content review, and set a compliance sign-off process before any training goes live.

- Map learning paths by role. Use your competency table to assign specific modules to each staff segment. Avoid blanket rollouts.

- Integrate compliance checkpoints. Build regulatory review gates into each training stage. Do not treat compliance as a final step.

- Launch in phases. Start with leadership, then cascade to operational teams. This builds top-down accountability.

- Collect feedback at each phase. Use short surveys and structured debriefs to capture what is working and what is not.

- Run iterative improvement cycles. Schedule quarterly content reviews tied to regulatory updates and internal incident data.

The NIST AI RMF core functions are the foundation for trustworthy workflow implementation. Each step in your rollout should map back to at least one of the four functions.
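
One way to enforce that mapping is a small check that flags any rollout step with no framework function attached. This is a sketch under stated assumptions: the step names come from the list above, but the function assigned to each step is an illustrative guess, not an official NIST mapping, and `unmapped_steps` is a hypothetical helper.

```python
NIST_FUNCTIONS = frozenset({"Govern", "Map", "Measure", "Manage"})

# Assumed mapping for illustration; review it with your compliance owner.
ROLLOUT_STEPS = {
    "Establish governance structure": {"Govern"},
    "Map learning paths by role": {"Map"},
    "Integrate compliance checkpoints": {"Govern", "Manage"},
    "Launch in phases": {"Manage"},
    "Collect feedback at each phase": {"Measure"},
    "Run iterative improvement cycles": {"Measure", "Manage"},
}


def unmapped_steps(steps: dict[str, set[str]]) -> list[str]:
    """Return the steps that map to none of the four NIST AI RMF functions."""
    return [name for name, funcs in steps.items() if not (funcs & NIST_FUNCTIONS)]
```

An empty result from `unmapped_steps(ROLLOUT_STEPS)` means every step is anchored to the framework. A non-empty result is a prompt to either justify the step or drop it.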

"Compliance is not a destination. It is a continuous process embedded in how your people learn and work."

Iterative cycles are not optional. Regulations change. AI systems evolve. Staff turn over. A workflow without a built-in refresh cycle can become outdated within a year. Resources on trusted AI deployment provide practical models for keeping workflows current without rebuilding them from scratch each year.

Measuring success and continuous improvement

Effective workflows require measurement and ongoing refinement. Without data, you cannot prove compliance or justify investment. Measurement must be built into the workflow from day one, not added as an afterthought.

| Metric | What it measures | Measurement method |
|---|---|---|
| Completion rate | Participation and reach | LMS reporting |
| Assessment scores | Knowledge retention | Pre/post tests |
| Incident response time | Operational skill application | Incident logs |
| Compliance audit results | Regulatory alignment | External audits |
| Skill application rate | Behavior change | Manager observation |
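
Several of these metrics reduce to simple arithmetic once the raw numbers are exported. The sketch below assumes you can pull enrollment counts, paired pre/post test scores, and before/after incident figures from your own systems; the function names are hypothetical and the inputs are whatever your LMS and incident logs provide.

```python
from statistics import mean


def completion_rate(completed: int, enrolled: int) -> float:
    """Fraction of enrolled staff who completed the training (0.0 to 1.0)."""
    if enrolled <= 0:
        raise ValueError("enrolled must be positive")
    return completed / enrolled


def mean_score_gain(pre_post: list[tuple[float, float]]) -> float:
    """Average improvement from pre-test to post-test across learners."""
    return mean(post - pre for pre, post in pre_post)


def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, expressed in percent."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100
```

For example, `completion_rate(45, 50)` returns `0.9`, and `percent_reduction` applied to incident counts or response times before and after a training cycle gives the kind of figure that belongs in a board report.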

Feedback loops are the engine of continuous improvement. Use three primary sources:

- Staff surveys: Capture perceived relevance, clarity, and confidence after each module.

- Compliance audits: Identify gaps between training content and actual regulatory requirements.

- Incident response data: Track whether trained behaviors reduce AI-related errors or escalation failures.

Recalibrating your workflow based on this data is not a sign of weakness. It is a sign of a mature program. Balancing governance with velocity requires tiered risk measurement frameworks like AGEI (AI Governance Effectiveness Index), which helps organizations prioritize where to invest improvement effort.

Connect measurement results to business outcomes. If your AI education workflow reduces compliance incidents by 25%, that figure belongs in your board report. Linking education metrics to AI strategy insights shows leadership that training is a strategic investment, not a cost center.

Perspective: Why most AI education fails in regulated environments and how to succeed

Most AI education programs in regulated industries fail for one reason: they are designed to satisfy auditors, not to change behavior. A one-hour annual training module with a multiple-choice quiz at the end checks a box. It does not build judgment, and it does not prepare staff for real AI risk scenarios.

The deeper problem is cultural. Organizations treat AI literacy as a compliance obligation rather than a strategic capability. When education is owned exclusively by the legal or compliance department, it loses relevance for the people who actually use AI tools daily.

Cross-functional buy-in changes this. When business unit leaders co-design training content, when technical teams validate scenarios, and when executives model AI accountability, education becomes part of how the organization operates. It stops being a once-a-year event and starts being a continuous practice.

The organizations that succeed embed AI learning into existing workflows. They connect training to real decisions, real tools, and real consequences. Germán León's AI education strategies show how this integration creates programs that staff actually use and that regulators actually respect. The shift from compliance training to strategic capability building is not a small adjustment. It is a fundamental redesign of how your organization treats knowledge.

Accelerate your AI education workflow with expert guidance

Building a compliant, effective AI education workflow is complex work. Getting it right requires both regulatory knowledge and practical experience with how AI systems behave in real organizational settings.

Germán León works with corporate leaders in regulated industries to design and execute AI education programs that meet compliance standards and drive strategic value. His AI education support services include executive briefings, custom training program design, and governance framework alignment. Real-world AI case studies and applied projects such as automotive AI solutions demonstrate what structured AI education looks like in practice. If your organization is ready to move beyond checkbox training, expert support is available.

Frequently asked questions

What is an AI education workflow?

An AI education workflow is a structured process for teaching staff AI skills and responsible practices aligned with compliance and strategic goals. The NIST AI RMF and the WEF Playbook are two frameworks commonly used to build these workflows in regulated environments.

Why is workflow structure important for AI compliance?

A workflow ensures all staff reach consistent knowledge standards and generates the audit trails that show regulators proactive risk management. The NIST AI RMF is a common reference point for trustworthy and auditable AI practices.

Which frameworks are best for regulated industries?

The NIST AI RMF and the WEF Responsible AI Playbook are widely recognized in regulated sectors. The NIST AI RMF is a voluntary framework, so pair it with binding requirements such as the EU AI Act and sector-specific rules where they apply.

How can organizations measure the impact of AI education?

Organizations can track compliance rates, participation, and practical skill application using regular audits and structured improvement cycles. Structured risk tiering frameworks help balance compliance measurement with operational velocity.

What common pitfalls should leaders avoid?

Avoid checkbox training that satisfies auditors but does not change behavior. Successful workflows embed AI education into daily practice and build an adaptive learning culture across all functions.